@DLeonhardt

@OppInsights

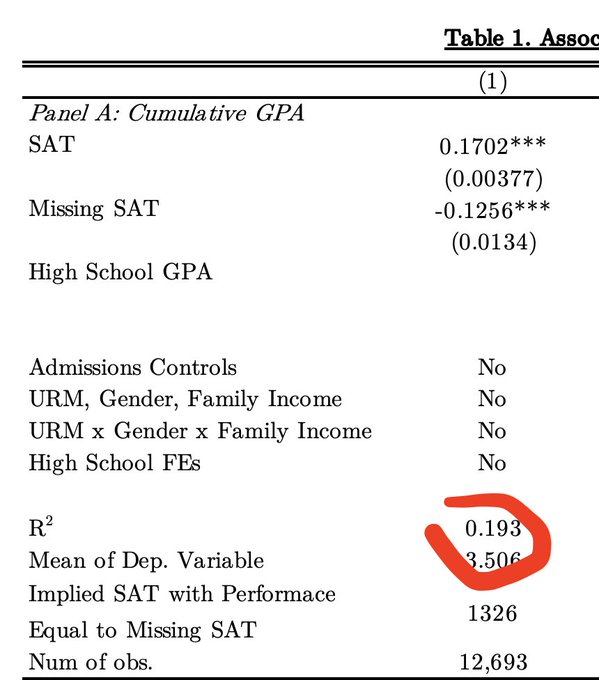

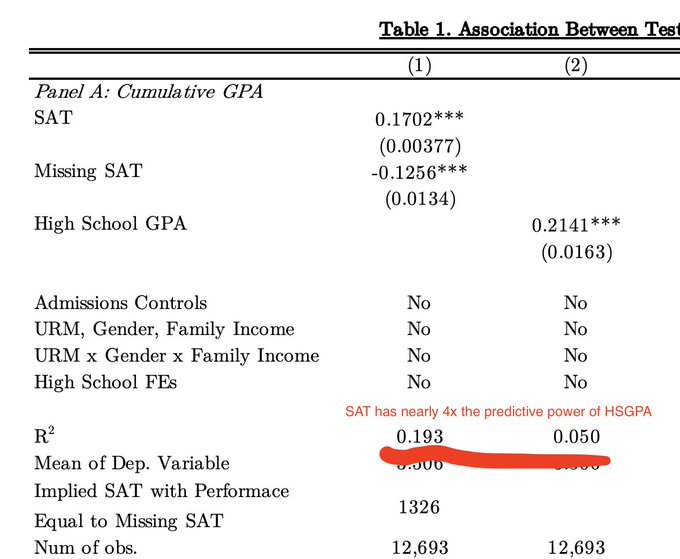

We can, however, make inferences based on the @OppInsights technical appendix. Lesson one: predicting with BOTH SAT and HS GPA gives you 4x more predictive power than using HS GPA alone. So far so good!


Replies

Much respect for @DLeonhardt's journalism, but this thread is going on my quant syllabus as an example of how to mislead (not lie! but mislead) with statistics. SAT/ACT are way less of a deal than is implied here.

Strap in, because we're going into the weeds on this thread...


@DLeonhardt

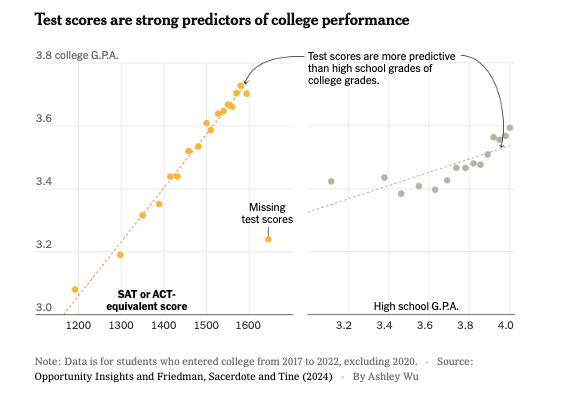

Here David says “the relationship between test scores and college grades… is strong.” And golly what a steep line!

The problem is that the strength of the relationship isn’t measured by the steepness of the line, but how closely data points hew to it.


@DLeonhardt

Using statistical jargon, the correct measure of the strength (and predictive value) of the relationship is r-squared (the degree to which data points hew to the line), not the regression coefficient (the slope of the line).
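To make the slope-versus-fit distinction concrete, here is a minimal sketch on synthetic data (not the @OppInsights data). Both simulated datasets are built with the same true slope, and only the noise level differs. The function name `slope_and_r2` and all numbers are assumptions chosen for illustration.

```python
import numpy as np

def slope_and_r2(x, y):
    """Return the OLS slope and r-squared for a one-predictor regression."""
    slope, _intercept = np.polyfit(x, y, 1)  # coefficients come highest degree first
    r = np.corrcoef(x, y)[0, 1]
    return slope, r ** 2

rng = np.random.default_rng(0)
x = rng.normal(size=5000)

# Same true slope (1.0) in both datasets; only the scatter around the line differs.
y_tight = 1.0 * x + rng.normal(scale=0.3, size=x.size)
y_loose = 1.0 * x + rng.normal(scale=2.0, size=x.size)

slope_tight, r2_tight = slope_and_r2(x, y_tight)
slope_loose, r2_loose = slope_and_r2(x, y_loose)

print(f"tight: slope={slope_tight:.2f}, r2={r2_tight:.2f}")
print(f"loose: slope={slope_loose:.2f}, r2={r2_loose:.2f}")
```

The two lines come out about equally steep, yet the second explains far less of the variation in `y`.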


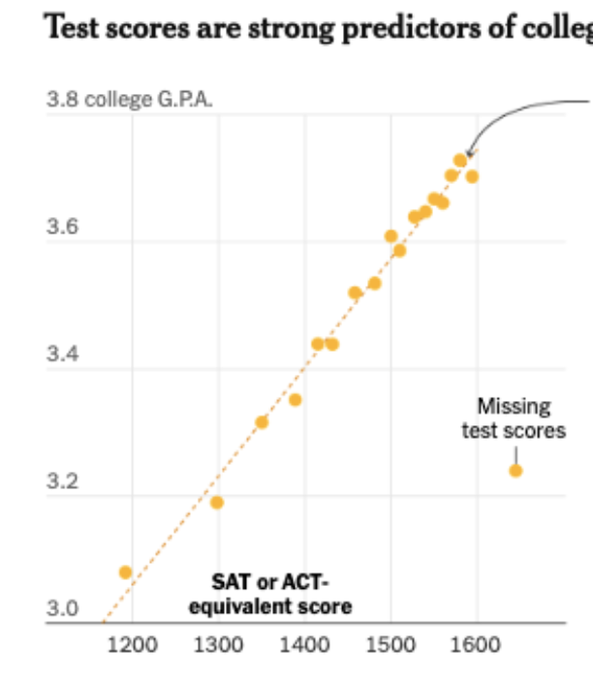

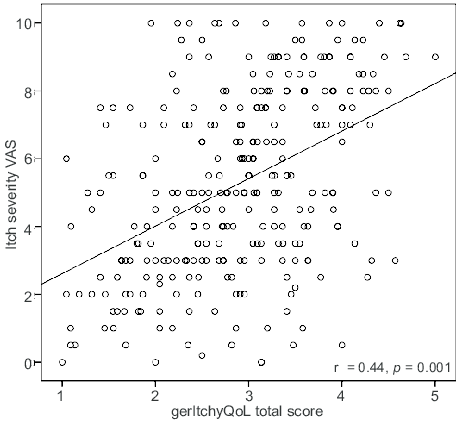

@DLeonhardt

You might look at this graph and think “those data points are awfully close.” But they aren’t the real data points. They're binned means.

Given information in the @OppInsights technical appendix, the actual relationship looks more like the second image here (r=0.44).
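A rough sketch of why binned means look so tidy, using synthetic data generated with the r=0.44 quoted above (these are not the real student records). The choice of 20 equal-sized bins is an assumption for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
n, r = 20000, 0.44  # r is taken from the thread; everything else is simulated

# Standardized x and y constructed so their population correlation equals r.
x = rng.normal(size=n)
y = r * x + np.sqrt(1 - r**2) * rng.normal(size=n)

# Sort by x and split into 20 equal-sized bins, then average within each bin.
order = np.argsort(x)
x_means = np.array([b.mean() for b in np.array_split(x[order], 20)])
y_means = np.array([b.mean() for b in np.array_split(y[order], 20)])

r_raw = np.corrcoef(x, y)[0, 1]
r_binned = np.corrcoef(x_means, y_means)[0, 1]

print(f"correlation in raw data:     {r_raw:.2f}")
print(f"correlation of binned means: {r_binned:.2f}")
```

Averaging within bins washes out the individual-level scatter, so the binned points sit almost exactly on the line even though the raw points do not.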


@DLeonhardt

@OppInsights

According to the @OppInsights analysis, standardized test scores explain less than 1/5 of the variation in college GPA. So over 80% of the variation in college grades exists among students with similar test scores. Does that sound like strong prediction to you?
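The arithmetic, assuming the "less than 1/5" figure comes from squaring the r=0.44 quoted earlier in the thread for a one-predictor regression:

$$
r^2 = 0.44^2 = 0.1936 \approx 19\% < \tfrac{1}{5}, \qquad 1 - r^2 = 0.8064 \approx 81\%
$$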


@DLeonhardt

@OppInsights

Problem #2: a strawman. Evidence is presented to answer the question “if you used only SAT or only HS GPA to predict college grades, which would be better?” And by either steepness of line or r-squared, SAT does better. However…


@DLeonhardt

@OppInsights

That question is completely irrelevant to our purpose. The real question is, “compared to a prediction that ignores SAT scores, how much better is a prediction that uses them?” And neither @DLeonhardt nor @OppInsights presents the analysis required to address that question.
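For the curious, the comparison being asked for looks something like the sketch below: fit one model without SAT, one with it, and look at the gain in R-squared. The data are synthetic and the coefficients (0.3, 0.5) are made-up assumptions, so the printed numbers say nothing about the real applicant pool. The helper `r_squared` is hypothetical.

```python
import numpy as np

def r_squared(X, y):
    """R-squared of an OLS fit of y on the columns of X plus an intercept."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ coef
    return 1 - resid.var() / y.var()

rng = np.random.default_rng(2)
n = 10000

# Synthetic standardized predictors; SAT and HS GPA are made to correlate (0.5).
hs_gpa = rng.normal(size=n)
sat = 0.5 * hs_gpa + np.sqrt(0.75) * rng.normal(size=n)
college_gpa = 0.3 * hs_gpa + 0.3 * sat + rng.normal(size=n)

r2_sat_alone = r_squared(sat.reshape(-1, 1), college_gpa)
r2_without_sat = r_squared(hs_gpa.reshape(-1, 1), college_gpa)
r2_with_sat = r_squared(np.column_stack([hs_gpa, sat]), college_gpa)
incremental = r2_with_sat - r2_without_sat

print(f"R2, SAT alone:          {r2_sat_alone:.3f}")
print(f"R2, HS GPA only:        {r2_without_sat:.3f}")
print(f"R2, HS GPA + SAT:       {r2_with_sat:.3f}")
print(f"gain from adding SAT:   {incremental:.3f}")
```

Because the predictors overlap, the gain from adding SAT to a model that already has HS GPA is smaller than the R-squared SAT earns on its own. The same logic extends to any larger set of non-SAT predictors.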


@DLeonhardt

@OppInsights

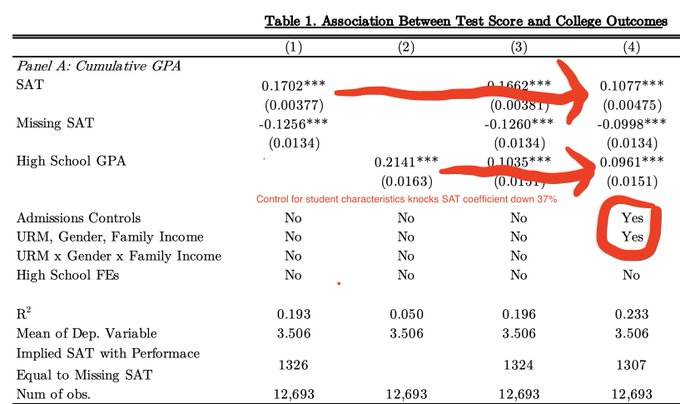

But admissions offices have access to way more than these two measures. When we build a model that includes some of them--gender, whether a student is a legacy, athlete, first gen, under-represented minority, early decision, etc.--a funny thing happens to SAT predictive power.


@DLeonhardt

@OppInsights

It gets cut. A lot. This gets at the question “who the heck are those students with sub-1200 SATs at elite colleges anyway?” Well, it appears a lot of them are legacies, athletes, etc. We don’t need a test score to know that some of these students are lesser academic performers.


@DLeonhardt

@OppInsights

With these controls, the predictive power of the SAT is cut more than a third. The predictive power of HS GPA barely budges. Our explanatory power, measured by R-squared, is higher than it was. But it goes up a LOT more if we control for one more factor…
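Here is a toy version of how a control can cut the SAT coefficient. It assumes a single hypothetical flag (`special`, standing in for legacy, athlete, etc.) that is associated with both lower scores and lower college grades. All data and effect sizes are invented for illustration and do not reproduce the @OppInsights regressions.

```python
import numpy as np

def ols_slopes(X, y):
    """OLS coefficients of y on the columns of X, with the intercept dropped."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef[1:]

rng = np.random.default_rng(3)
n = 20000

# Hypothetical flag for admits with a non-academic hook (about 20% of students).
special = rng.random(n) < 0.2
# Flagged students have lower standardized scores on average (assumed).
sat = rng.normal(size=n) - 1.0 * special
# College GPA depends on SAT and, separately, on the flag (assumed effects).
college_gpa = 0.2 * sat - 0.5 * special + rng.normal(size=n)

sat_only = ols_slopes(sat.reshape(-1, 1), college_gpa)[0]
sat_with_control = ols_slopes(np.column_stack([sat, special]), college_gpa)[0]

print(f"SAT coefficient, no controls:   {sat_only:.3f}")
print(f"SAT coefficient, with the flag: {sat_with_control:.3f}")
```

Without the control, SAT gets credit for information the flag already carries, so its coefficient is inflated. Adding the flag brings it back toward the value used to generate the data.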


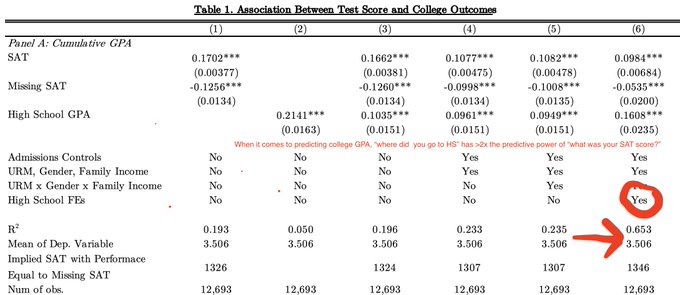

@DLeonhardt

@OppInsights

Where did the applicant go to HS?

This information, by itself, has twice the predictive power of SAT scores. Students from “elite” high schools get better grades than students from lesser-resourced ones, controlling for all the other student characteristics in the earlier analysis.


@DLeonhardt

@OppInsights

While @OppInsights never shows us the regression we need – the one that has everything BUT the SAT scores – we can infer that, on the margin, test scores are definitely improving predictive power. But the clear majority of potential predictive power comes from other factors.


@DLeonhardt

@OppInsights

So where @DLeonhardt’s thread might lead you to believe elite colleges are forced to take shots in the dark when deprived of test scores, admitting students whom they have no idea about, in reality they retain the clear majority of their predictive power.


@DLeonhardt

@OppInsights

And sure, the SAT can occasionally promote equity. But if it’s an equity agenda you want to pursue, standardized tests can’t hold a candle to:

1) Ending legacy admissions

2) Ending donor child admissions

3) Ending most athlete recruitment

“When you don’t have test scores, the students who suffer most are those with high grades at relatively unknown high schools, the kind that rarely send kids to the Ivy League,” says @ProfDavidDeming, a Harvard economist. “The SAT is their lifeline.”


@DLeonhardt

@OppInsights

There's a deeper question of whether elite colleges should lavish their ample resources on "sure things," the students who will do fine in college and life with or without those resources, or the students on the margin, the ones for whom resources could be transformational.


@DLeonhardt

@OppInsights

Good predictions are key to admissions strategy in either case, but if you think that colleges have just gotta have SAT scores to make decent predictions...

think twice.

/end.


@JakeVigdor

@DLeonhardt

@OppInsights

There is also substantial content in the application itself. The essays are not trivial.


@JakeVigdor

@DLeonhardt

@OppInsights

Not sure if this is a big issue, but I am surprised that this analysis, and the NYT piece, keep focusing on GPA in levels. I would assume that there is much more information in the GPA ranking within school.
